This blog post explains how I used serverless functions to automate release candidate verification for the Apache OpenWhisk project.

Automating this process has the following benefits…

- Removes the chance of human error inherent in the previous, manual validation process.

- Allows me to validate new releases without access to my dev machine.

- Makes the tool usable by all committers, since it is hosted as an external serverless web app.

Automating release candidate validation makes it easier for project committers to participate in release voting. This should make it faster to get necessary release votes, allowing us to ship new versions sooner!

background

apache software foundation

The Apache Software Foundation has a well-established release process for delivering new product releases from projects belonging to the foundation. According to their documentation…

> An Apache release is a set of valid & signed artifacts, voted on by the appropriate PMC and distributed on the ASF’s official release infrastructure.

Releasing a new software version requires the release manager to create a release candidate from the project source files. Source archives must be cryptographically signed by the release manager. All source archives for the release must comply with strict criteria to be considered valid release candidates. This includes (but is not limited to) the following requirements:

- Checksums and PGP signatures for source archives are valid.

- LICENSE, NOTICE and DISCLAIMER files are included and correct.

- All source files have license headers.

- No compiled archives are bundled in source archives.

Release candidates can then be proposed on the project mailing list for review by members of the Project Management Committee (PMC). PMC members are eligible to vote on all release candidates. Before casting their votes, PMC members are required to check that the release candidate meets the requirements above.

If at least three binding positive votes are cast (with more positive than negative votes), the release passes! The release manager can then move the release candidate archives to the release directory.
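The passing rule above is simple arithmetic over the binding votes. Here is a minimal JavaScript sketch of it; the vote record shape and the function name `release_vote_passes` are hypothetical and not part of the verifier described in this post.

```javascript
// Hypothetical vote shape: { vote: 1 | 0 | -1, binding: true | false }.
function release_vote_passes (votes) {
  // Only binding votes (from PMC members) decide the outcome.
  const binding = votes.filter(v => v.binding)
  const plus = binding.filter(v => v.vote === 1).length
  const minus = binding.filter(v => v.vote === -1).length
  // At least three binding +1 votes, and more +1 than -1 votes.
  return plus >= 3 && plus > minus
}

console.log(release_vote_passes([
  { vote: 1, binding: true },
  { vote: 1, binding: true },
  { vote: 1, binding: false },
  { vote: -1, binding: true }
])) // false: only two binding +1 votes
```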

apache openwhisk releases

As a committer and PMC member on the Apache OpenWhisk project, I’m eligible to vote on new releases.

Apache OpenWhisk (currently) has 52 separate source repositories under the project on GitHub. With a fast-moving open-source project, new release candidates are constantly being proposed, and each one requires the necessary number of binding PMC votes to pass.

Manually validating release candidates can be a time-consuming process. This can make it challenging to get a quorum of binding votes from PMC members for a release to pass. I started thinking about how I could improve my productivity around the validation process, enabling me to participate in more votes.

Would it be possible to automate some (or all) of the steps in release candidate verification? Could we even use a serverless application to do this?

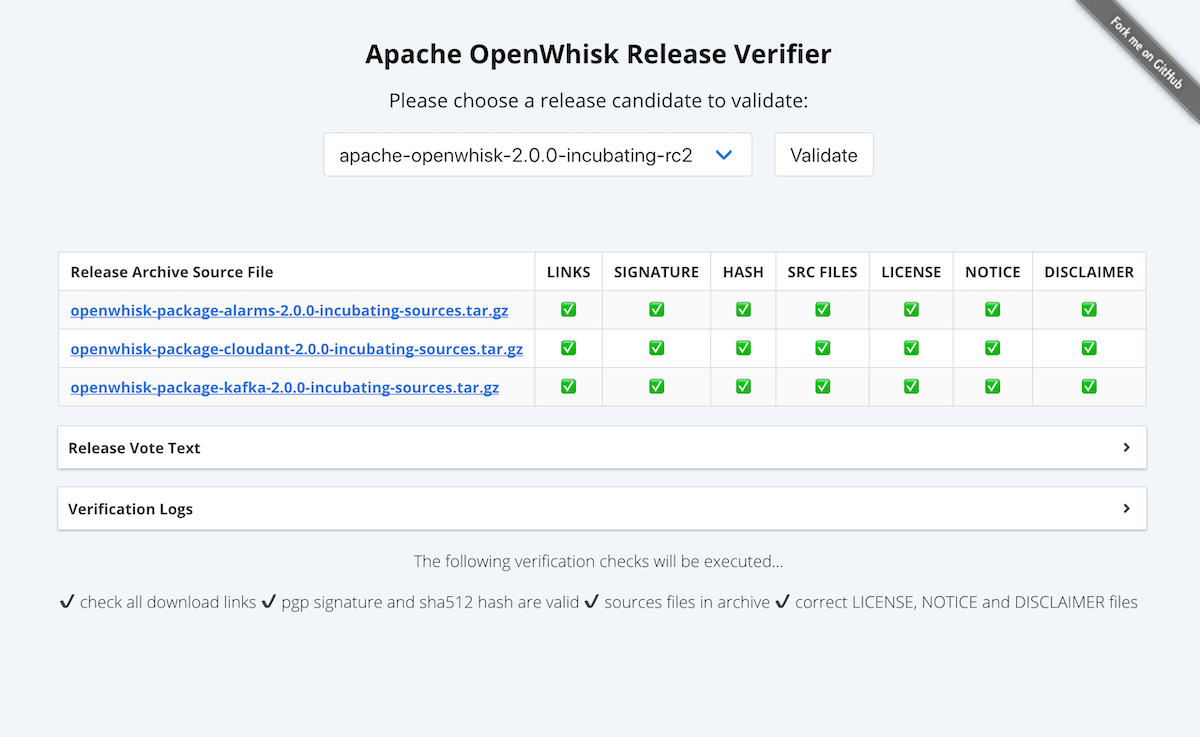

apache openwhisk release verifier

Spoiler Alert: YES! I ended up building a serverless application to do this for me.

It is available at https://apache.jamesthom.as/

Source code for this project is available here.

IBM Cloud Functions is used to run the serverless backend for the web application. This means Apache OpenWhisk is being used to validate future releases of itself… which is awesome.

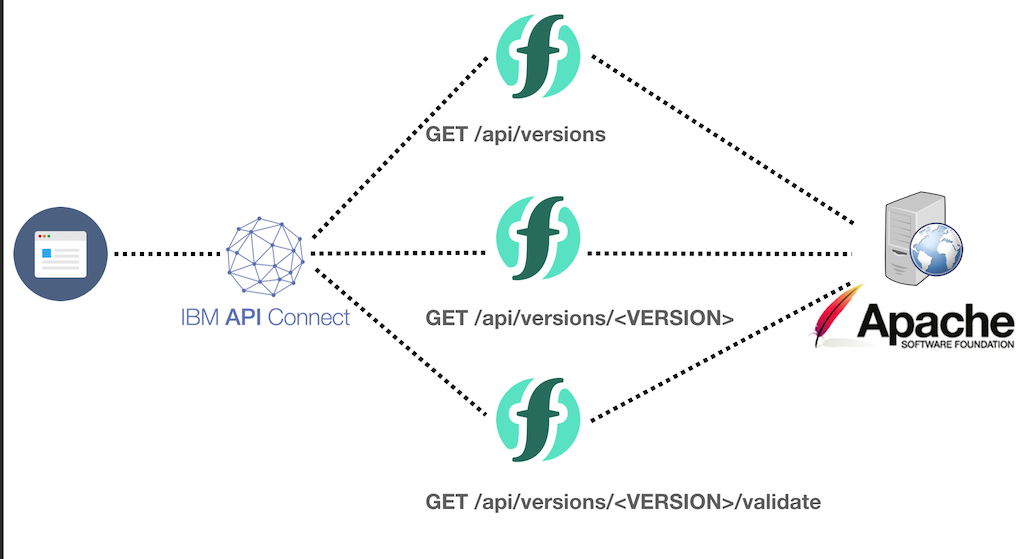

architecture

HTML, JS and CSS files are served by GitHub Pages from the project repository.

Backend APIs are Apache OpenWhisk actions running on IBM Cloud Functions.

Both the front-end and the API are served from custom sub-domains of my personal domain.

available release candidates

When the user loads the page, the drop-down list needs to contain the current list of release candidates from the ASF development distribution site.

This information is available to the web page via the https://apache-api.jamesthom.as/api/versions endpoint. Each time it is invoked, the serverless function powering this API parses the live HTML page from the ASF development distribution site to extract the current list of release candidates.

```
$ http get https://apache-api.jamesthom.as/api/versions
HTTP/1.1 200 OK
...

{
    "versions": [
        "apache-openwhisk-0.11.0-incubating-rc1",
        "apache-openwhisk-0.11.0-incubating-rc2",
        "apache-openwhisk-1.13.0-incubating-rc1",
        "apache-openwhisk-1.13.0-incubating-rc2",
        "apache-openwhisk-2.0.0-incubating-rc2",
        "apache-openwhisk-3.19.0-incubating-rc1"
    ]
}
```
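The post doesn't show how the function extracts candidates from the ASF page. A minimal sketch, assuming the development distribution page is a plain directory listing where each release candidate appears as a link like `<a href="apache-openwhisk-2.0.0-incubating-rc2/">`; the `parse_versions` helper is hypothetical and uses a regular expression rather than a full HTML parser.

```javascript
// Hypothetical helper: extract release candidate directory names from an
// HTML directory listing, assuming links like
// <a href="apache-openwhisk-2.0.0-incubating-rc2/">.
function parse_versions (html) {
  const pattern = /href="(apache-openwhisk-[^"\/]+-rc\d+)\/?"/g
  // A Set removes candidates that are linked more than once.
  const versions = new Set()
  for (const match of html.matchAll(pattern)) {
    versions.add(match[1])
  }
  return [...versions].sort()
}
```

A regular expression is enough for a simple, machine-generated listing; a real HTML parser would be more robust if the page markup changed.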

release candidate version info

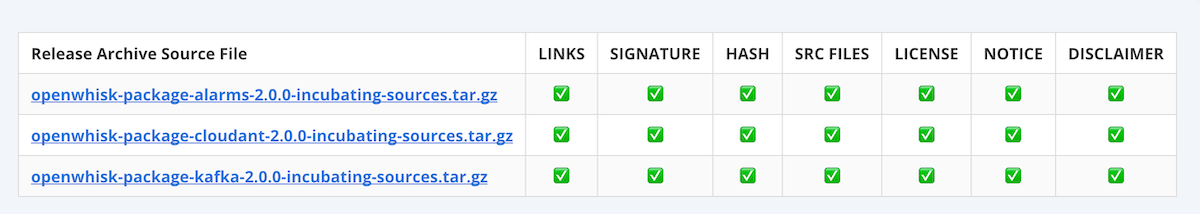

Release candidates may have multiple source archives being distributed in that release. Validation steps need to be executed for each of those archives within the release candidate.

Once a user has selected a release candidate version, source archives to validate are shown in the table. This data is available from the https://apache-api.jamesthom.as/api/versions/VERSION endpoint. This information is parsed from the HTML page on the ASF site.

```
$ http get https://apache-api.jamesthom.as/api/versions/apache-openwhisk-2.0.0-incubating-rc2
HTTP/1.1 200 OK
...

{
    "files": [
        "openwhisk-package-alarms-2.0.0-incubating-sources.tar.gz",
        "openwhisk-package-cloudant-2.0.0-incubating-sources.tar.gz",
        "openwhisk-package-kafka-2.0.0-incubating-sources.tar.gz"
    ]
}
```
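Once the archive names are known, each one has to be downloaded along with its signature and hash files. A sketch of building those URLs, assuming the companion files use the `.asc` and `.sha512` suffixes next to each archive (the usual ASF convention) and that `base_url` points at the development distribution directory; the function name `download_urls` and the example base URL are hypothetical.

```javascript
// Hypothetical helper: for each source archive in a release candidate,
// build the URLs of the archive, its detached PGP signature (.asc) and
// its SHA512 hash file (.sha512).
function download_urls (base_url, version, files) {
  // base_url must end with "/" so the version directory is appended to it.
  const dir = new URL(`${version}/`, base_url)
  return files.map(file => ({
    name: file,
    archive: new URL(file, dir).href,
    signature: new URL(`${file}.asc`, dir).href,
    hash: new URL(`${file}.sha512`, dir).href
  }))
}
```

`base_url` must end with a slash; otherwise `new URL` replaces its last path segment instead of appending to it.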

release verification

Having selected a release candidate version, clicking the “Validate” button starts the validation process. This calls the https://apache-api.jamesthom.as/api/versions/VERSION/validate endpoint, which runs the serverless function that executes the validation steps.
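Because every check runs against every source archive in the release candidate, the validate function needs to fan out and then gather the results. A minimal sketch of that orchestration, assuming each check is an async function returning `{ valid }`; `validate_release` and the result shape are hypothetical rather than the project's actual API response.

```javascript
// Hypothetical orchestration: run every check against every archive in
// parallel and collect the { valid } results into one JSON-friendly object.
// `checks` maps a check name to an async function (file) => ({ valid }).
async function validate_release (files, checks) {
  const results = await Promise.all(files.map(async file => {
    const entries = await Promise.all(
      Object.entries(checks).map(async ([name, check]) => {
        try {
          return [name, await check(file)]
        } catch (err) {
          // A check that throws is reported as a failure, not a crash.
          return [name, { valid: false, error: err.message }]
        }
      })
    )
    return { file, results: Object.fromEntries(entries) }
  }))
  return { files: results }
}
```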

This serverless function will carry out the following verification steps…

checking download links

All the source archives for a release candidate are downloaded to temporary storage in the runtime environment. The function also downloads the associated SHA512 and PGP signature files for comparison. Multiple readable streams can be created from the same file path to allow the verification steps to happen in parallel, rather than having to re-download the archive for each task.
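The parallel-streams idea can be sketched with Node's `fs.createReadStream`: each check opens its own stream on the same downloaded file, and `Promise.all` waits for both. This is a hypothetical example; the function names are illustrative, and a simple byte count stands in for a second check such as PGP verification.

```javascript
const crypto = require('crypto')
const fs = require('fs')

// Consume a readable stream fully and return its SHA512 digest as hex.
function sha512_of (stream) {
  return new Promise((resolve, reject) => {
    const hash = crypto.createHash('sha512')
    stream.on('data', chunk => hash.update(chunk))
    stream.on('end', () => resolve(hash.digest('hex')))
    stream.on('error', reject)
  })
}

// Stand-in for a second check: count the bytes in a readable stream.
function byte_count (stream) {
  return new Promise((resolve, reject) => {
    let total = 0
    stream.on('data', chunk => { total += chunk.length })
    stream.on('end', () => resolve(total))
    stream.on('error', reject)
  })
}

// Each task reads the same local file through its own stream, so the
// checks run concurrently without downloading the archive again.
async function run_checks_in_parallel (file_path) {
  const [digest, size] = await Promise.all([
    sha512_of(fs.createReadStream(file_path)),
    byte_count(fs.createReadStream(file_path))
  ])
  return { digest, size }
}
```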

checking SHA512 hash values

SHA512 sums are distributed as a text file containing the hash value, written as upper-case hex characters in space-separated blocks of eight.

```
openwhisk-package-alarms-2.0.0-incubating-sources.tar.gz:
3BF87306 D424955B B1B2813C 204CC086 6D27FA11 075F0B30 75F67782 5A0198F8 091E7D07
B7357A54 A72B2552 E9F8D097 50090E9F A0C7DBD1 D4424B05 B59EE44E
```

The serverless function needs to dynamically compute the hash for the source archive and compare the hex bytes against the text file contents. Node.js comes with a built-in crypto library making it easy to create hash values from input streams.

This is the function used to compute and compare the hash values.

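```javascript
const crypto = require('crypto')

// parse_hash_from_file() and logger are helpers from the project source.
const hash = (file_stream, hash_file, name) => {
  return new Promise((resolve, reject) => {
    const sha512 = parse_hash_from_file(hash_file)
    const digest = crypto.createHash('sha512')

    // Update the hash as chunks arrive; reject on read errors.
    file_stream.on('data', chunk => digest.update(chunk))
    file_stream.on('error', reject)

    file_stream.on('end', () => {
      const stream_hash = digest.digest('hex')
      // Published hashes are upper-case and space-separated; normalise first.
      const expected = sha512.signature.replace(/\s+/g, '').toLowerCase()
      const valid = stream_hash === expected

      logger.log(`file (${name}) calculated hash: ${stream_hash}`)
      logger.log(`file (${name}) hash from file: ${sha512.signature}`)

      resolve({ valid })
    })
  })
}
```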
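The `parse_hash_from_file` helper used above isn't shown in the post. A hypothetical stand-in, `parse_sha512_file`, assuming the `name: HEX HEX ...` layout from the hash file above: it strips the file name prefix and all whitespace and lower-cases the result so it can be compared directly with `digest('hex')` output.

```javascript
// Hypothetical stand-in for the project's parse_hash_from_file helper.
// Parses "file name:" followed by upper-case hex in space-separated,
// possibly line-wrapped blocks.
function parse_sha512_file (contents) {
  const colon = contents.indexOf(':')
  if (colon === -1) throw new Error('missing "file name:" prefix')
  const file = contents.slice(0, colon).trim()
  // Drop all whitespace and lower-case, matching digest('hex') output.
  const signature = contents.slice(colon + 1).replace(/\s+/g, '').toLowerCase()
  // A SHA512 digest is 64 bytes, i.e. 128 hex characters.
  if (!/^[0-9a-f]{128}$/.test(signature)) {
    throw new Error(`expected 128 hex characters, got ${signature.length}`)
  }
  return { file, signature }
}
```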

validating PGP signatures

Node.js’ crypto library does not support validating PGP signatures.

I’ve used the OpenPGP.js library to handle this task. This is a JavaScript implementation of the OpenPGP protocol (and the most popular PGP library for Node.js). Three inputs are needed to verify a PGP signature:

- Message contents to check.

- PGP signature for the message.

- Public key matching the private key used to sign the release.

The “message” to check is the source archive. PGP signatures come from the .asc files located in the release candidate directory.

```
-----BEGIN PGP SIGNATURE-----
Version: GnuPG v1

iQIcBAABAgAGBQJcpO0FAAoJEHKvDMIsTPMgf0kP+wbtJ1ONZJQKjyDVx8uASMDQ
...
-----END PGP SIGNATURE-----
```

Public keys used to sign releases are stored in the root folder of the release directory for that project.
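ASF projects typically collect every release manager's public key in a single keys file, often as several separate ASCII-armored blocks with descriptive text in between. If the key parser in use expects one armored block at a time, one option is to split the file first. A minimal sketch, assuming that layout; `split_armored_keys` is a hypothetical helper.

```javascript
// Hypothetical helper: split a keys file that concatenates several
// ASCII-armored public key blocks (with free text between them) into
// individual armored blocks.
function split_armored_keys (text) {
  // Non-greedy match so each BEGIN pairs with the nearest END.
  const pattern = /-----BEGIN PGP PUBLIC KEY BLOCK-----[\s\S]*?-----END PGP PUBLIC KEY BLOCK-----/g
  return text.match(pattern) || []
}
```

Each block could then be passed to `openpgp.key.readArmored` and the resulting `keys` arrays concatenated before calling `openpgp.verify`.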

This function is used to implement the signature checking process.

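```javascript
// OpenPGP.js v4 API; logger is a helper from the project source.
const openpgp = require('openpgp')

const signature = async (file_stream, armored_signature, public_keys, name) => {
  const options = {
    message: openpgp.message.fromBinary(file_stream), // source archive
    signature: await openpgp.signature.readArmored(armored_signature), // .asc file
    publicKeys: (await openpgp.key.readArmored(public_keys)).keys // release keys
  }

  const verified = await openpgp.verify(options)
  // Consume the streamed data so the signature check can complete.
  await openpgp.stream.readToEnd(verified.data)

  try {
    return { valid: (await verified.signatures[0].verified) === true }
  } catch (err) {
    // Treat a rejected verification as a failed check rather than a crash.
    logger.log(`file (${name}) signature check failed: ${err.message}`)
    return { valid: false }
  }
}
```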

scanning archive files

Using the node-tar library, downloaded source archives are extracted into the local runtime to allow scanning of individual files.
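After node-tar has unpacked an archive into a temporary directory, the scanner needs a list of every extracted file. A sketch of that listing step using only Node's built-in `fs.promises` API; `list_files` is hypothetical and assumes extraction has already finished.

```javascript
const fs = require('fs')
const path = require('path')

// Recursively list every file under `root`, returning paths relative to
// it with forward slashes, so they can be checked against naming rules.
async function list_files (root, dir = root) {
  const entries = await fs.promises.readdir(dir, { withFileTypes: true })
  const files = []
  for (const entry of entries) {
    const full = path.join(dir, entry.name)
    if (entry.isDirectory()) {
      files.push(...(await list_files(root, full)))
    } else if (entry.isFile()) {
      files.push(path.relative(root, full).split(path.sep).join('/'))
    }
  }
  return files.sort()
}
```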

LICENSE.txt, DISCLAIMER.txt and NOTICE.txt files are checked to ensure correctness. An external NPM library is used to check every file in the archive for binary contents. The code also scans for directory names that might contain third-party libraries (node_modules or .gradle).
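The checks described above can be sketched as a pure function over the extracted file list. This is hypothetical: `scan_archive` only checks that the LICENSE, NOTICE and DISCLAIMER files are present (not that their contents are correct), assumes paths are relative to the archive's top-level folder, and swaps the unnamed NPM binary-detection library for a simple heuristic that flags any file containing a NUL byte in its first 8000 bytes.

```javascript
const REQUIRED = ['LICENSE.txt', 'NOTICE.txt', 'DISCLAIMER.txt']
const THIRD_PARTY_DIRS = ['node_modules', '.gradle']

// Heuristic standing in for the NPM library: text files rarely contain
// NUL bytes, so treat any NUL near the start as a sign of binary content.
function looks_binary (buffer) {
  return buffer.subarray(0, 8000).includes(0)
}

// `files` are relative paths with forward slashes; `read` returns a Buffer.
function scan_archive (files, read) {
  const missing = REQUIRED.filter(name => !files.includes(name))
  const third_party = files.filter(file =>
    file.split('/').some(part => THIRD_PARTY_DIRS.includes(part)))
  const binary = files.filter(file => looks_binary(read(file)))
  return {
    missing,
    third_party,
    binary,
    valid: missing.length === 0 && third_party.length === 0 && binary.length === 0
  }
}
```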

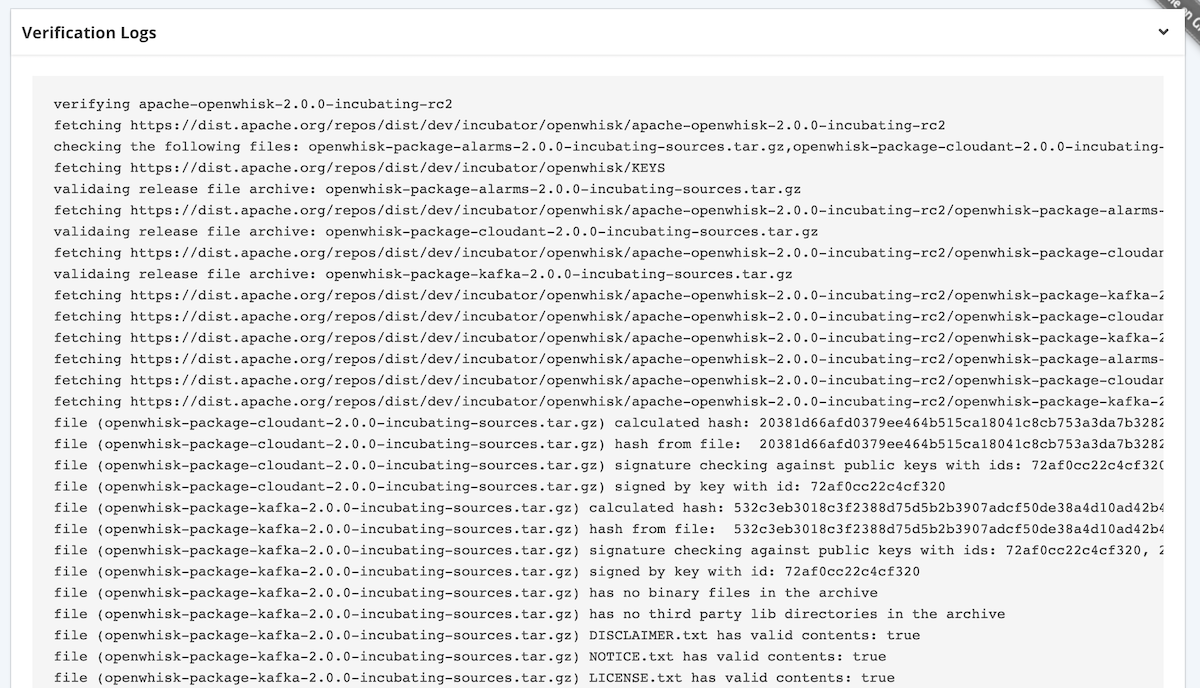

capturing validation logs

It is important to provide PMC members with verifiable logs of the validation steps performed. This allows them to sanity-check the steps performed (including manual validation). This verification text can also be provided in the voting emails as evidence of release candidate validity.

Using a custom logging library, all debug logs sent to the console are recorded in the action result (and therefore returned in the API response).
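The capturing logger can be approximated with a few lines of JavaScript. A minimal sketch, assuming a hypothetical `create_logger` helper and an OpenWhisk-style `main(params)` action: every message is still written to the console (which ends up in the activation logs) and is also collected in memory so it can be returned in the action result.

```javascript
// Hypothetical capturing logger: print each message and keep a
// timestamped copy so it can be returned with the action result.
function create_logger () {
  const lines = []
  return {
    log (...args) {
      const line = args
        .map(arg => (typeof arg === 'string' ? arg : JSON.stringify(arg)))
        .join(' ')
      lines.push(`${new Date().toISOString()} ${line}`)
      console.log(line)
    },
    captured: () => lines.slice()
  }
}

// Example OpenWhisk-style action that includes the captured logs.
async function main (params) {
  const logger = create_logger()
  logger.log('validating', params.version)
  return { version: params.version, logs: logger.captured() }
}
```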

showing results

Once all the validation tasks have been executed, the results are returned to the front-end as a JSON response. The client-side JS parses these results and updates the validation table. Validation logs are shown in a collapsible window.

With emoji pass and failure indicators for each step, the user can easily see whether a release passes the validation checks. If any of the steps have failed, the validation logs help to explain why.
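On the client side, turning the JSON results into pass/fail emoji is a small mapping. A sketch, assuming a hypothetical response shaped like `{ files: [{ file, results: { hash: { valid } } }] }`; `to_rows` is illustrative, and a check missing from the response is shown as a failure.

```javascript
// Map a boolean result to an emoji indicator.
const status = valid => (valid ? '✅' : '❌')

// Build one table row per archive: the file name, then one indicator per
// check name, in the order given by `checks`.
function to_rows (response, checks) {
  return response.files.map(({ file, results }) => [
    file,
    ...checks.map(check => status(Boolean(results[check] && results[check].valid)))
  ])
}
```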

other tools

This is not the only tool that can automate checks needed to validate Apache Software Foundation releases.

Another community member has also built a bash script (rcverify.sh) that can verify releases on your local machine. This script will automatically download the release candidate files and run many of the same validation tasks as the remote tool locally.

There is also Apache Rat, an existing Java-based tool from another project for auditing license headers in source files.

conclusion

Getting new product releases published for an open-source project under the ASF is not a simple task for developers used to pushing a button on GitHub! The ASF has a series of strict guidelines on what constitutes a release and the ratification process from PMC members. PMC members need to run a series of manual verification tasks before casting binding votes on proposed release candidates.

This can be a time-consuming task for PMC members on a project like Apache OpenWhisk, with 52 different project repositories all being released at different intervals. In an effort to improve my own productivity around this process, I started looking for ways to automate the verification tasks. This would enable me to participate in more votes and be a “better” PMC member.

This led to building a serverless web application to run all the verification tasks remotely, which is now hosted at https://apache.jamesthom.as. This tool uses Apache OpenWhisk (provided by IBM Cloud Functions), which means the project is being used to verify future releases of itself! I’ve also open-sourced the code to provide an example of how to use the platform for automating tasks like this.

With this tool and others listed above, verifying new Apache OpenWhisk releases has never been easier!